PerformanceCollector and the Heisenberg Uncertainty Group

This is the last post in my series on my PerformanceCollector tool. It is a warning, with an anecdote, that you can over-monitor your server. By default PerformanceCollector (PC) monitors quite a bit of information, but I've had the utility running on high-throughput production OLTP workloads and could not discern any negative performance impact from running it with the default configuration. It has all of the necessary fail-safes in place (I hope) to ensure it doesn't go off the rails. For instance, there is a configuration setting that caps the size of the database and auto-purges data if needed.

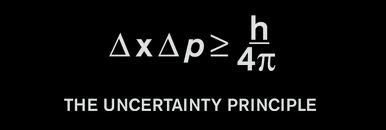

The Heisenberg Uncertainty Principle (HUP) may eventually come into play, however, and you should be aware of that. If you are not familiar, the HUP, as it is loosely applied to monitoring, says that the more you monitor something, the more the act of monitoring will adversely impact the system. Strictly speaking, that is the "observer effect", which is often conflated with the HUP.

If you find that PC is a bit too heavyweight for your environment then you can disable some of the AddIns or extend the interval between runs. Or you can tweak the code to fit your needs. The possibilities are endless. In older versions of the tool I ran into some cases where the system was under such stress that PC could not accurately log the waiting and blocking of the system. That kinda renders the tool useless when you are trying to diagnose severe performance problems. But I haven't had that happen in a few years now so either my code is getting better, or M$ has improved the performance of their DMVs to work under harsh environments. I assume the latter.

But you can take performance monitoring too far. Here's a quick story to illustrate the fact.

I consulted at a company that had deployed two third-party performance monitoring tools that shall remain nameless. They worked OK but did not capture the wait and subsystem information like PC does, so the client decided to deploy my scripts as well.

There were now 3 tools running.

PC only collects waits and blocks every 15 seconds and it's likely it won't capture EVERY "performance event" that occurs during that interval. But again, I'm worried about HUP.

Primum non nocere.

Like any good doctor, "first, do no harm."

Frankly, users are generally tolerant of an occasional performance blip, but anything over 15 seconds and rest assured some user will open up a help desk ticket. That is why the monitoring interval is 15 seconds. But this interval is a bit like Prunes Analysis (when taking prunes...are 3 enough or are 4 too many?). You can certainly make the case that every 10 seconds is better, or that every minute is optimal. Decisions like this are very experiential.

This particular client decided that with a 15-second interval too much valuable information was not being logged. They decided that if a block or wait occurred, the 15-second monitoring interval would be shrunk to one second to get even better metrics. This required one of their developers to change the WHILE 1=1 loop in PC. If no blocking/waiting was observed after 60 seconds of monitoring at the one-second interval, the code reverted to every 15 seconds.
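The switching rule described above can be sketched in a few lines. This is an illustrative Python simulation, not the T-SQL that was actually written into PC's loop; the names `IntervalState`, `next_state`, and `simulate` are hypothetical, and the sketch assumes that each one-second sample without a wait or block counts as one second of quiet time.

```python
from dataclasses import dataclass

DEFAULT_INTERVAL_S = 15  # PC's default sampling interval (from the post)
FAST_INTERVAL_S = 1      # the client's shortened interval
QUIET_WINDOW_S = 60      # quiet time required before reverting to the default


@dataclass
class IntervalState:
    interval_s: int = DEFAULT_INTERVAL_S
    quiet_s: int = 0  # seconds spent in fast mode with no wait/block seen


def next_state(state: IntervalState, saw_wait_or_block: bool) -> IntervalState:
    """Pick the interval for the next sleep, given the latest sample."""
    if saw_wait_or_block:
        # Any observed wait or block starts (or restarts) fast mode.
        return IntervalState(FAST_INTERVAL_S, 0)
    if state.interval_s == FAST_INTERVAL_S:
        quiet = state.quiet_s + FAST_INTERVAL_S
        if quiet >= QUIET_WINDOW_S:
            # A full quiet window has passed: fall back to the default.
            return IntervalState(DEFAULT_INTERVAL_S, 0)
        return IntervalState(FAST_INTERVAL_S, quiet)
    return state  # already at the default interval and nothing was seen


def simulate(samples):
    """Feed a sequence of booleans (wait or block seen?) and return the
    interval chosen after each sample."""
    state = IntervalState()
    intervals = []
    for saw in samples:
        state = next_state(state, saw)
        intervals.append(state.interval_s)
    return intervals


if __name__ == "__main__":
    # Hypothetical busy system: some wait shows up once every 30 samples.
    busy = [i % 30 == 0 for i in range(300)]
    print(set(simulate(busy)))
```

Under these assumptions, a system that shows even one wait or block per minute never accumulates the 60 quiet seconds needed to revert, so the loop stays at the one-second interval indefinitely.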

IMHO, that's far too much logging. It is quite normal for a busy system to constantly experience some variant of waiting. That meant that this new code was ALWAYS running in one-second interval mode. This hyper-logging mode was implemented after I left the client, and they did it without consulting me, or really anyone with even a modicum of understanding of SQL Server performance monitoring.
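As rough arithmetic on what "always in one-second mode" means, the sketch below counts collection runs per day at each interval. It assumes every run finishes well within its interval and ignores the time the collection itself takes; `samples_per_day` is a hypothetical helper, not part of PC.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def samples_per_day(interval_s: int) -> int:
    """Number of collection runs per day at a fixed interval (in seconds)."""
    return SECONDS_PER_DAY // interval_s


default_runs = samples_per_day(15)  # default 15-second interval
fast_runs = samples_per_day(1)      # one-second hyper-logging interval

print(default_runs, fast_runs, fast_runs // default_runs)
```

If each run logs a similar amount of data, that is roughly fifteen times the rows written, and fifteen times the DMV queries issued, every day.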

It didn't take long until performance of the system was worse than ever. At one point the instance was crashing every few days due to tempdb log space issues. Further, PerformanceCollector did not have all of the fail-safes at the time and the db was about 1TB in size, even with 30-day purging in place. Another performance consultant was brought in (I was out-of-pocket) and he quickly determined that PC was the biggest consumer of tempdb and IO resources on the instance. I looked thoroughly stupid when I was called back in to the client to explain myself. I quickly determined that my code was not at fault; rather, the change that shortened the monitoring interval, and so increased the monitoring frequency, was the cause.

Without finger-pointing I explained that we should put my PC code back to the defaults and re-evaluate why hyper-logging was needed at all. The other consultant agreed and within a day or so everyone was calm and happy again. Mistakes like this happen.

As I was wrapping up this engagement I finally asked, "So, have you identified and corrected many issues that PC has found? Clearly if you thought hyper-logging was needed you must've corrected quite a few performance problems with the tool."

The DBA responded, pensively, "Well, we keep the trend data but we haven't opened up any tickets to the dev team to fix specific stored procs yet. We don't have any budget for a DBA to look at the missing indexes either. But maybe we'll get to do all of that some day."

The devs, architects, and I choked back the laughter. All of that monitoring and nothing to show for it. To this day the other architects at that client call the DBAs the HUGs (Heisenberg Uncertainty Group) behind their backs. I have since returned to that client for other engagements and I have witnessed this for myself. Those of us in the know chuckle at the joke. But the lead HUG didn't get the joke apparently…he got angry once and asked not to be compared with Walter White.

Moral of the story: monitor as much as you need to, and nothing more. If you don't have the budget or resources to fix the issues you find during your monitoring, then it is best to consider NOT monitoring. No need to make your performance worse with too much monitoring. That will keep Heisenberg from rolling over in his grave.

You have just read "PerformanceCollector and the Heisenberg Uncertainty Group" on davewentzel.com. If you found this useful please feel free to subscribe to the RSS feed.

Dave Wentzel